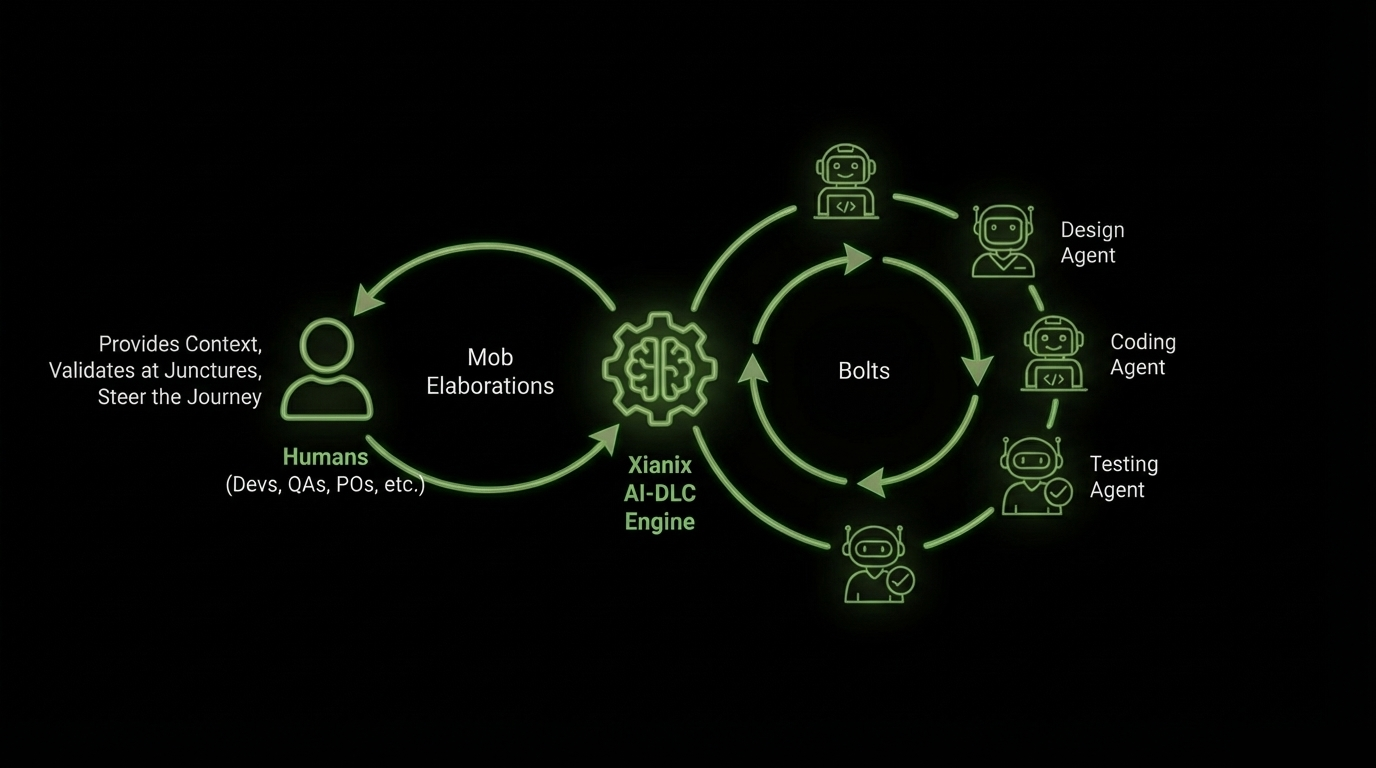

Example flow

A day in the life

One example of how work can move from backlog to production when agents handle the routine analysis and humans make the important decisions.

Creates a backlog item: "As a user, I want to export reports as PDF."

Cross-references the request against the existing reporting module and finds that CSV is currently the only export format. Sends the PO precise questions about page layout preferences and chart embedding.

Answers with layout specs and chart preferences, and confirms that a PDF rendering library will be needed.

Updates the item with full acceptance criteria, edge cases (empty reports, large datasets, special characters), and dependency notes. Marks the item as groomed.

Pulls the groomed item into the sprint and assigns it to a developer.

Produces a spec: extend `IReportExporter`, add a `PdfReportExporter` implementation, configure the page size, and update the export endpoint. Flags the choice of PDF library for tech lead review.
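The spec in this step can be sketched in Python. The document names `IReportExporter` and `PdfReportExporter` but not their members. The `export(report) -> bytes` signature, the `Report` and `PageSize` types, the `EXPORTERS` registry, and the `export_report` function standing in for the export endpoint are all hypothetical. The PDF renderer is a placeholder hook because the library choice is still waiting on tech lead review.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass, field
from typing import Callable, Optional
import csv
import io


@dataclass
class Report:
    # Hypothetical report shape: a title plus tabular data.
    title: str
    columns: list[str]
    rows: list[list[str]] = field(default_factory=list)


@dataclass(frozen=True)
class PageSize:
    width_pt: float
    height_pt: float


A4 = PageSize(595.0, 842.0)
LETTER = PageSize(612.0, 792.0)


class IReportExporter(ABC):
    """Interface name from the spec; the method shape is an assumption."""

    content_type: str

    @abstractmethod
    def export(self, report: Report) -> bytes:
        ...


class CsvReportExporter(IReportExporter):
    """Stand-in for the existing CSV export path."""

    content_type = "text/csv"

    def export(self, report: Report) -> bytes:
        buf = io.StringIO()
        writer = csv.writer(buf)
        writer.writerow(report.columns)
        writer.writerows(report.rows)
        return buf.getvalue().encode("utf-8")


# A renderer turns a report plus page size into PDF bytes (hypothetical hook).
Renderer = Callable[[Report, PageSize], bytes]


class PdfReportExporter(IReportExporter):
    content_type = "application/pdf"

    def __init__(self, page_size: PageSize = A4, render: Optional[Renderer] = None):
        self.page_size = page_size  # configurable page size, per the spec
        # The real PDF library is still pending review, so rendering is injected.
        self._render = render or self._placeholder_render

    def export(self, report: Report) -> bytes:
        return self._render(report, self.page_size)

    @staticmethod
    def _placeholder_render(report: Report, page: PageSize) -> bytes:
        # Stand-in bytes so the sketch runs without a PDF dependency (not a valid PDF).
        return f"PDF-PLACEHOLDER {page.width_pt}x{page.height_pt} {report.title}".encode("utf-8")


# Hypothetical registry that the export endpoint consults.
EXPORTERS: dict[str, IReportExporter] = {
    "csv": CsvReportExporter(),
    "pdf": PdfReportExporter(page_size=A4),
}


def export_report(report: Report, fmt: str) -> tuple[str, bytes]:
    """Endpoint-side dispatch: pick an exporter by format, return (content type, body)."""
    exporter = EXPORTERS.get(fmt.lower())
    if exporter is None:
        raise ValueError(f"unsupported export format: {fmt!r}")
    return exporter.content_type, exporter.export(report)
```

With the format lookup in one registry, adding PDF support touches the endpoint in a single place, and the CSV exporter itself is left unchanged.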

Picks up the spec. Uses AI for boilerplate and repetitive setup work and concentrates on the rendering logic and edge cases that need closer attention. Opens a PR.

Architecture checks pass. Flags one gap: the acceptance criterion for special characters in report titles isn't covered by the implementation.

Adds special character handling and pushes the fix. PR is approved.
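The document does not say what the special character fix involved. Here is one concrete case. Suppose titles are written into PDF content streams as literal strings, rather than passed through a library that escapes them (which a library would normally do). Then backslashes and parentheses must be escaped, and control characters must be written as escape sequences. The helper names `escape_pdf_literal` and `title_operand` are hypothetical. Non-ASCII characters such as accented letters pass through unchanged. Whether they render correctly depends on the font and encoding the chosen library uses.

```python
def escape_pdf_literal(text: str) -> str:
    """Escape text for use inside a PDF literal string, i.e. between ( and )."""
    # Escapes defined for PDF literal strings; escaping every parenthesis is
    # always safe, even though balanced pairs are technically allowed unescaped.
    named = {
        "\\": "\\\\", "(": "\\(", ")": "\\)",
        "\n": "\\n", "\r": "\\r", "\t": "\\t", "\b": "\\b", "\f": "\\f",
    }
    out = []
    for ch in text:
        if ch in named:
            out.append(named[ch])
        elif ord(ch) < 0x20:
            # Remaining control characters become three-digit octal escapes.
            out.append(f"\\{ord(ch):03o}")
        else:
            # Everything else passes through; glyph support depends on the font.
            out.append(ch)
    return "".join(out)


def title_operand(title: str) -> str:
    """Wrap an escaped title as a literal-string operand (e.g. for a text-showing operator)."""
    return f"({escape_pdf_literal(title)})"
```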

Generates targeted test cases for the highest-risk areas: large dataset rendering, special character handling, and interactions between the new exporter and the existing CSV path.

Runs the targeted tests. Finds a layout issue with wide tables and logs it for the developer to fix.
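A targeted test for the wide-table issue could estimate a table's rendered width and compare it with the printable width of the page. The sketch below is a rough heuristic, not how any particular PDF library measures text. It assumes an average glyph width of half the font size, fixed cell padding, and 36 pt margins, all of which are hypothetical values. A real check would use the font metrics exposed by whichever library is chosen.

```python
from dataclasses import dataclass

# Assumed average glyph width as a fraction of font size (rough heuristic).
AVG_GLYPH_RATIO = 0.5


@dataclass(frozen=True)
class PageSize:
    width_pt: float
    height_pt: float


A4_PORTRAIT = PageSize(595.0, 842.0)
A4_LANDSCAPE = PageSize(842.0, 595.0)


def estimated_table_width(columns, rows, font_size_pt=10.0, cell_padding_pt=4.0):
    """Estimate table width in points from the longest text in each column."""
    widths = [len(name) for name in columns]
    for row in rows:
        # Assumes rectangular rows; cells beyond the header count are ignored.
        for i, cell in enumerate(row[: len(widths)]):
            widths[i] = max(widths[i], len(cell))
    return sum(w * font_size_pt * AVG_GLYPH_RATIO + 2 * cell_padding_pt for w in widths)


def table_fits(columns, rows, page, margin_pt=36.0, font_size_pt=10.0):
    """True if the estimated table width fits between the left and right margins."""
    printable_width = page.width_pt - 2 * margin_pt
    return estimated_table_width(columns, rows, font_size_pt) <= printable_width
```

Checking the same table against `A4_LANDSCAPE` is one cheap way to see whether switching orientation would be enough. The flow itself leaves the actual fix to the developer.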

Code is merged. Updates the API docs with the new export endpoint and adds a component doc section for the PDF exporter.